What was once the realm of speculative science fiction—fleets of driverless vehicles navigating complex urban grids without human intervention—is rapidly transitioning into an industrial-scale reality. The catalyst for this shift is not merely a single breakthrough in software, but a comprehensive convergence of massive computational power and sophisticated sensor integration. At the center of this evolution sits NVIDIA Hyperion 10, the latest iteration of a production platform designed to be the foundational architecture for Level 4 autonomous mobility.

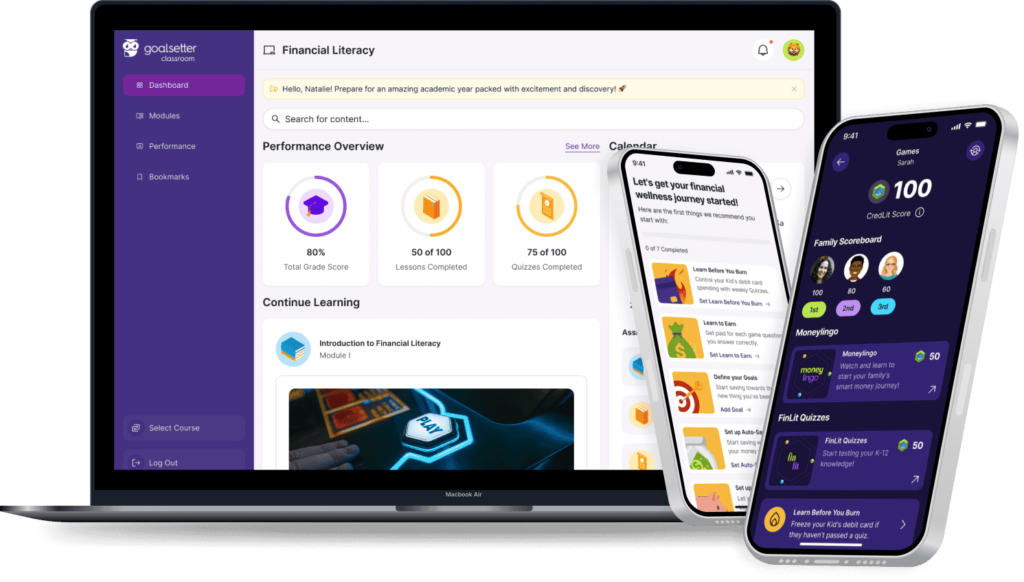

With a definitive production timeline targeting 2027, NVIDIA has signaled a strategic pivot from experimental pilot programs to global deployment. Through a high-profile partnership with Uber, the goal is to manufacture and deploy 100,000 self-driving taxis, utilizing Uber’s immense repository of real-world driving data to refine the AI’s decision-making capabilities. Hyperion 10 is not just a hardware kit; it is a mobile supercomputer integrated with a 36-sensor suite and a safety-certified operating system, purpose-built to handle the "computational tax" required for truly autonomous operation.

The Brain: DRIVE AGX Thor and Blackwell Architecture

The core of the Hyperion 10 platform is the DRIVE AGX Thor system-on-a-chip (SoC). To understand the leap Thor represents, one must look at its underlying Blackwell architecture. Traditionally, autonomous driving systems struggled with "corner cases"—rare, unpredictable events that fixed algorithms couldn't process. Thor addresses this by introducing a dedicated transformer engine optimized for generative AI and Vision Language Action (VLA) models.

Each DRIVE AGX Thor chip delivers over 2,000 FP4 teraflops (or 1,000 TOPS of INT8) of real-time compute. In a Level 4 robotaxi configuration, NVIDIA utilizes a dual-platform setup, providing a combined processing power exceeding 4,000 FP4 teraflops. This massive headroom allows the vehicle to run multiple redundant AI models simultaneously, ensuring that if one sub-system experiences a delay or error, the secondary system maintains control.
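
The dual-platform arithmetic and the fallback idea described above can be sketched in a few lines. This is only an illustration, not NVIDIA's arbitration logic: the `PlannerResult` type, the `select_output` function, and the 50 ms deadline are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional

THOR_FP4_TFLOPS = 2_000  # per-chip figure quoted in the text
NUM_THOR_CHIPS = 2       # dual-platform Level 4 configuration


def combined_fp4_tflops(per_chip: int = THOR_FP4_TFLOPS,
                        chips: int = NUM_THOR_CHIPS) -> int:
    """Aggregate FP4 throughput of the multi-chip setup."""
    return per_chip * chips


@dataclass
class PlannerResult:
    source: str          # which compute platform produced the plan
    steering_deg: float  # example output of a driving model
    latency_ms: float    # how long the model took to answer


def select_output(primary: Optional[PlannerResult],
                  secondary: PlannerResult,
                  deadline_ms: float = 50.0) -> PlannerResult:
    """Use the primary plan unless it is missing or late (hypothetical deadline)."""
    if primary is None or primary.latency_ms > deadline_ms:
        return secondary
    return primary


backup = PlannerResult("secondary", steering_deg=0.5, latency_ms=20.0)
late = PlannerResult("primary", steering_deg=0.4, latency_ms=80.0)
print(combined_fp4_tflops())               # 4000
print(select_output(late, backup).source)  # secondary
```

A production system would arbitrate on far more than latency (health monitors, plausibility checks, watchdogs), but the sketch shows why spare compute matters: the secondary path has to be computing a usable answer all the time, not only after a fault.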

This transition to the Blackwell architecture is critical for the next generation of "reasoning-based" AI. Unlike earlier versions that relied on pattern recognition (matching a visual input to a database of known objects), Thor-powered systems can reason through a scene. If a ball rolls into the street, the AI understands the causal likelihood of a child following it, adjusting its braking profile before the child is even visible.

The Eyes: A 360-Degree Multimodal Sensor Suite

For an AI to make high-stakes decisions, its input data must be reliable and cross-checked. Hyperion 10 moves away from the vision-only philosophy favored by some competitors, instead opting for a "defense-in-depth" multimodal sensor strategy. This provides 360-degree perception and triple-layer redundancy across various environmental conditions.

The standard Hyperion 10 configuration integrates a staggering 36 sensors:

- 14 High-Definition Cameras: Providing 360-degree visual coverage with high dynamic range for low-light and high-glare scenarios.

- 9 Radars: Essential for measuring the velocity of surrounding objects, particularly in heavy rain or fog where cameras may struggle.

- 12 Ultrasonic Sensors: Used for short-range proximity detection, crucial for tight urban maneuvers and parking.

- 1 High-Performance Lidar: Sourced from industry leader Hesai, this long-range lidar acts as the ultimate ground-truth sensor.
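
The sensor counts listed above can be written down as a small inventory and checked against the stated total of 36. The dictionary layout and function name are illustrative choices, not an NVIDIA configuration format.

```python
# Sensor counts as listed in the standard Hyperion 10 configuration.
SENSOR_SUITE = {
    "camera": 14,
    "radar": 9,
    "ultrasonic": 12,
    "lidar": 1,
}


def total_sensors(suite: dict[str, int]) -> int:
    """Sum the per-modality counts."""
    return sum(suite.values())


print(total_sensors(SENSOR_SUITE))  # 36
```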

Tech Spotlight: Hesai Ultra-Long-Range Lidar

The inclusion of Hesai’s lidar technology is a cornerstone of the Hyperion 10 safety profile. Unlike cameras, lidar provides precise 3D spatial mapping regardless of ambient lighting. By timing laser pulses reflected off objects, it builds a high-resolution "point cloud" of the environment, allowing the vehicle to detect obstacles up to 250 meters away with centimeter-level accuracy.
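
The ranging principle behind a pulsed lidar is time of flight: distance equals the speed of light times the round-trip time, divided by two. The sketch below applies that textbook relation to the 250-meter figure quoted above. It is not Hesai's signal-processing pipeline, and the function names are made up for the example.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the meter


def range_from_round_trip(round_trip_s: float) -> float:
    """Distance to the target given the pulse's round-trip time."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def round_trip_for_range(range_m: float) -> float:
    """Round-trip time a pulse needs to reach a target and return."""
    return 2.0 * range_m / SPEED_OF_LIGHT_M_S


t = round_trip_for_range(250.0)
print(f"{t * 1e6:.2f} microseconds")         # 1.67 microseconds
print(f"{range_from_round_trip(t):.1f} m")   # 250.0 m
```

Because a 250-meter echo returns in under two microseconds, centimeter-level ranging implies timing resolution on the order of tens of picoseconds, which is why lidar timing electronics are a specialized component.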

The integration of these disparate data streams is handled by the DRIVE OS, which synchronizes the inputs to create a unified, real-time world model. This ensures that the AI isn't just seeing a "picture" of the road, but is operating within a mathematically precise 3D environment.
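
One small ingredient of synchronizing sensor streams is pairing samples that were captured at nearly the same instant. The sketch below shows generic nearest-timestamp matching with a tolerance. It is not the DRIVE OS API; the function name, the sample timestamps, and the 20 ms tolerance are assumptions for illustration.

```python
from bisect import bisect_left
from typing import Optional, Sequence


def nearest_sample(timestamps: Sequence[float], t: float,
                   tolerance_s: float) -> Optional[int]:
    """Index of the sample closest to time t, or None if none is close enough.

    `timestamps` must be sorted in ascending order.
    """
    if not timestamps:
        return None
    i = bisect_left(timestamps, t)
    # Only the neighbors on either side of the insertion point can be nearest.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(timestamps)]
    best = min(candidates, key=lambda j: abs(timestamps[j] - t))
    return best if abs(timestamps[best] - t) <= tolerance_s else None


camera_ts = [0.000, 0.033, 0.066, 0.100]  # hypothetical camera frame times (s)
lidar_sweep_time = 0.050                  # hypothetical lidar sweep time (s)
print(nearest_sample(camera_ts, lidar_sweep_time, tolerance_s=0.020))  # 2
```

Returning `None` when nothing falls within tolerance is deliberate: fusing a stale camera frame with a fresh lidar sweep would place objects in the wrong position in the world model.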

Evolutionary Leap: Hyperion 8 vs. Hyperion 10

To appreciate the scale of innovation, we must compare the outgoing Hyperion 8 architecture—currently found in many advanced driver-assistance systems (ADAS)—with the upcoming Hyperion 10.

| Specification | NVIDIA Hyperion 8 | NVIDIA Hyperion 10 |
|---|---|---|
| Primary SoC | DRIVE Orin | DRIVE AGX Thor (Blackwell) |
| Compute Power | 254 TOPS (INT8) | 2,000+ TFLOPS (FP4) |
| Architecture | Ampere | Blackwell |
| AI Support | Deep Learning / CNN | Generative AI / VLA Models |
| Lidar Integration | Optional / Variable | Standard (Hesai Long-Range) |
| Target Autonomy | Level 2+ / Level 3 | Level 4 (Robotaxis / Freight) |
| Energy Efficiency | High | Ultra-High (8-bit Floating Point) |
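
The Compute Power row mixes precisions (INT8 for Orin, FP4 for Thor), so the two headline numbers are not directly comparable. A like-for-like estimate can use the INT8 figure quoted earlier for Thor (1,000 TOPS) against Orin's 254 TOPS. The snippet below does only that arithmetic; it says nothing about real-world model throughput, which also depends on memory bandwidth and software.

```python
ORIN_INT8_TOPS = 254     # Hyperion 8, per the table
THOR_INT8_TOPS = 1_000   # Hyperion 10, INT8 figure quoted in the text


def speedup(new_tops: float, old_tops: float) -> float:
    """Ratio of peak throughput at the same numeric precision."""
    return new_tops / old_tops


per_chip = speedup(THOR_INT8_TOPS, ORIN_INT8_TOPS)
dual = speedup(2 * THOR_INT8_TOPS, ORIN_INT8_TOPS)
print(f"per chip: {per_chip:.2f}x, dual Thor: {dual:.2f}x")
# per chip: 3.94x, dual Thor: 7.87x
```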

The Soul: Alpamayo and the Cosmos Platform

Hardware is only as capable as the models it runs. To power the 100,000-strong fleet planned with Uber, NVIDIA is deploying its "Alpamayo" AI model. This is a reasoning-based architecture that moves beyond simple trajectory prediction.

The Alpamayo model is trained using the NVIDIA Cosmos world foundation model. Think of Cosmos as a massive, physics-compliant simulator. By feeding Uber’s millions of miles of real-world driving data into Cosmos, NVIDIA can "hallucinate" millions of dangerous edge-case scenarios—such as a vehicle hydroplaning or a multi-car pileup—allowing the AI to practice its response in a digital twin environment before ever hitting the pavement.

This symbiotic relationship with Uber is the "secret sauce" of the Hyperion 10 ecosystem. Uber provides the data and the commercial network; NVIDIA provides the computational backbone. By 2027, this cycle of real-world data and synthetic simulation is expected to produce a driving intelligence that statistically far exceeds human performance.

Industry Impact: The Level 4 Ecosystem

While the Uber partnership is the most visible application, the Hyperion 10 architecture is designed as an open, "Halos Certified" platform. This means it is a standardized set of hardware and software that other manufacturers can adopt to accelerate their own autonomous programs.

Key adopters across the industry highlight the platform’s versatility:

- Passenger Luxury: Lucid and Mercedes-Benz are utilizing elements of the DRIVE platform to transition from Level 3 highway piloting to Level 4 urban autonomy.

- Long-Haul Freight: Companies like Volvo and the startup Waabi are integrating Hyperion components into autonomous trucks, where the need for long-range sensing (the Hesai lidar) and massive compute is even more acute due to the braking distances of heavy loads.

- The Halos Program: This certification is meant to ensure that third-party components, from sensors to networking cables, meet NVIDIA’s standards for AI safety and cybersecurity, reducing the risk of outside interference with vehicle systems.
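
The freight point about braking distance can be made concrete with the standard stopping-distance formula: the distance covered during reaction time plus v² / (2a) under constant deceleration. The speed, reaction time, and deceleration values below are illustrative assumptions, not measured figures for any specific truck or for Hyperion 10.

```python
def stopping_distance_m(speed_m_s: float, reaction_s: float,
                        decel_m_s2: float) -> float:
    """Reaction distance plus braking distance at constant deceleration."""
    return speed_m_s * reaction_s + speed_m_s ** 2 / (2.0 * decel_m_s2)


SPEED = 25.0      # 90 km/h, assumed highway speed
REACTION = 0.5    # assumed system latency in seconds
CAR_DECEL = 7.0   # assumed passenger-car deceleration (m/s^2)
TRUCK_DECEL = 3.0 # assumed loaded-truck deceleration (m/s^2)

print(f"car:   {stopping_distance_m(SPEED, REACTION, CAR_DECEL):.1f} m")    # 57.1 m
print(f"truck: {stopping_distance_m(SPEED, REACTION, TRUCK_DECEL):.1f} m")  # 116.7 m
```

Under these assumptions the truck needs roughly twice the car's stopping distance, which uses up a much larger share of the 250-meter lidar range quoted earlier and leaves less margin for late detections.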

Strategic Comparison: NVIDIA Hyperion vs. Tesla FSD

In the world of autonomous driving, two distinct philosophies have emerged: Tesla’s vision-only, vertically integrated approach and NVIDIA’s sensor-fusion, open-ecosystem approach.

Tesla’s Full Self-Driving (FSD) relies on massive data scaling from its existing customer fleet, using only cameras. This keeps vehicle costs low but places a massive burden on the AI to "guess" depth and velocity from 2D images.

NVIDIA’s Hyperion 10 takes the opposite tack. By including lidar and radar, NVIDIA gives the AI direct range and velocity measurements to serve as "ground truth" data. While this makes the initial hardware stack more expensive, it significantly lowers the "uncertainty threshold" for Level 4 operation. For a robotaxi operator like Uber, the cost of the sensor suite is offset by the removal of the human driver and the increased safety profile, which reduces insurance and liability costs. Furthermore, NVIDIA’s open ecosystem allows for a diverse range of vehicle types, whereas Tesla’s software is locked to its own hardware.

FAQ

How does NVIDIA Hyperion 10 handle inclement weather?

Hyperion 10 relies on its multimodal sensor suite. Cameras can be obscured by heavy rain or snow, but the 9 onboard radars are largely unaffected by precipitation, and the Hesai lidar adds an independent, actively illuminated range measurement, although its effective range can shrink in dense rain or fog. Fusing the three modalities helps the vehicle maintain a 360-degree map of its surroundings in low-visibility conditions.

When will the 100,000 NVIDIA-Uber robotaxis be available?

The partnership aims for a phased deployment starting in 2027. The initial rollout will likely occur in geo-fenced urban environments where high-definition mapping is already complete, expanding globally as the AI matures through continuous data feedback.

Can Hyperion 10 be retrofitted into existing cars?

No. Hyperion 10 is a "production platform," meaning it must be integrated into the vehicle's electrical and mechanical architecture during the manufacturing process. It requires specific cooling for the DRIVE AGX Thor chips and precise mounting points for the 36-sensor suite.

As we look toward 2027, the arrival of Hyperion 10 marks the end of the "experimental" era of autonomous driving. We are moving into an era of industrial reliability, where the "driver" is a distributed network of Blackwell-powered supercomputers. For the travel industry and urban planners alike, the implications are profound: a world where mobility is a service, safety is engineered and statistically validated, and the steering wheel becomes a relic of the past.