Quick Facts

- Logistical Advantage: 10-day Moon launch windows compared to the 26-month wait cycles required for Mars missions.

- Thermal Efficiency: Vacuum cooling, which radiates waste heat directly to space, to address the 1,000 W per chip Thermal Wall currently facing high-end GPUs.

- Energy Source: Near-continuous solar power from photovoltaic arrays positioned at the lunar Peaks of Eternal Light.

- Market Scale: The orbital and lunar data center sector is projected to reach a valuation of $15-20 billion by 2030.

- Key Players: A collaborative AI infrastructure ecosystem led by SpaceX and xAI, with hardware integration from NVIDIA.

- Direct Answer: The future of AI infrastructure involves moving massive compute workloads to the Moon to leverage vacuum cooling and abundant solar power, bypassing the energy and thermal limits of Earth.

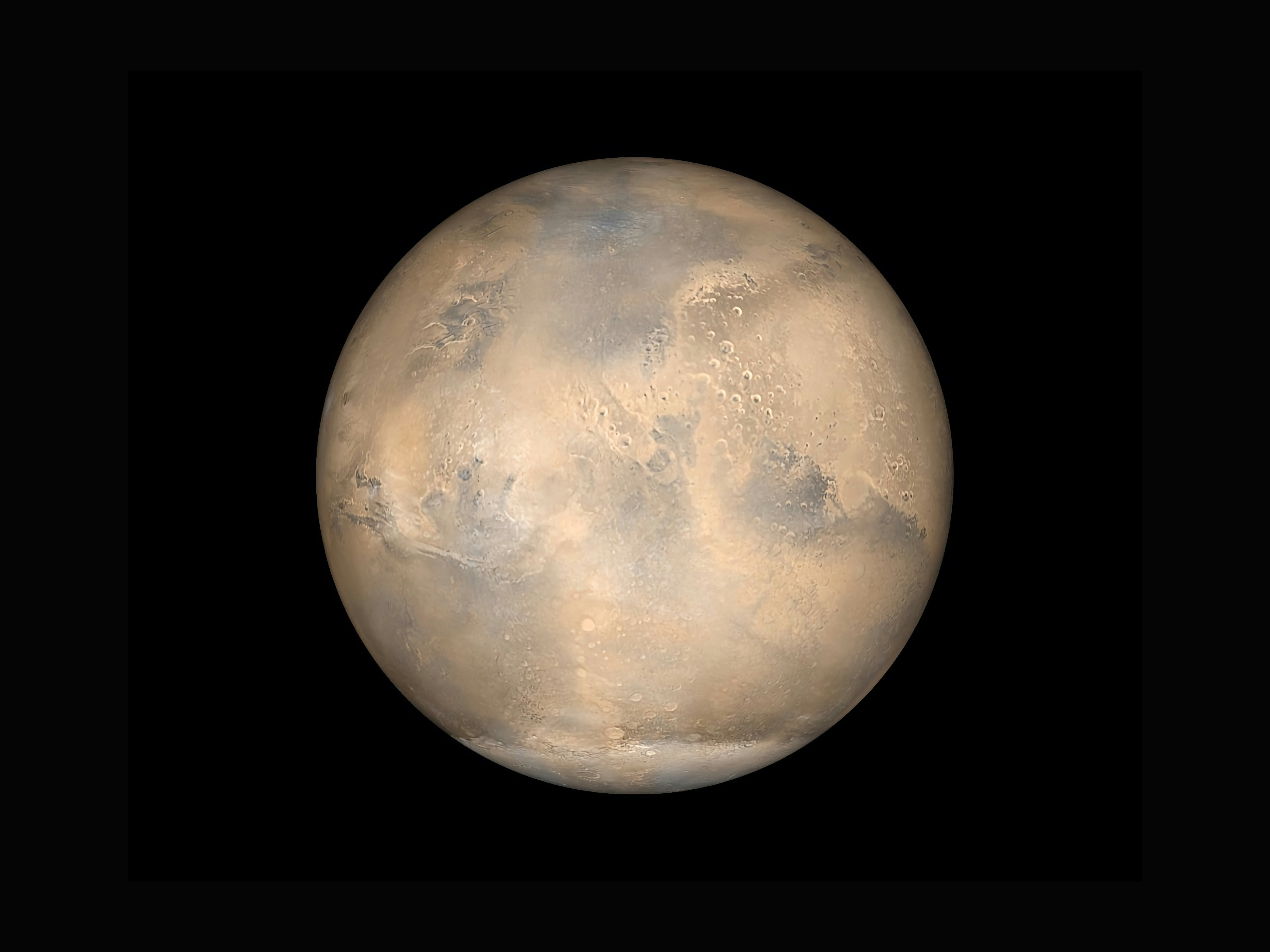

Moving AI infrastructure to the Moon offers unique advantages in thermal management and power availability. The lunar vacuum lets vacuum-cooled server racks radiate heat directly to space, while extensive solar arrays offer a sustainable energy source for high-density compute. Additionally, the Moon’s frequent launch windows and lower gravity facilitate the deployment of orbital compute architectures capable of handling galactic-scale processing demands.

The Earthly Bottleneck: Why Terrestrial Grids Are Failing

As we push toward artificial general intelligence, our planet is running out of the two things AI needs most: electricity and cold air. We are rapidly approaching what engineers call the Power Wall. On Earth, data center electricity demand is expected to surge to a staggering 245 GW, a figure that traditional power grids are simply not equipped to handle. When an AI infrastructure company attempts to build a new gigawatt-scale cluster, it often faces multi-year delays just to secure a grid connection.

Beyond power, the industry is hitting a Thermal Wall. Modern high-performance chips, such as the NVIDIA B200, consume upwards of 1,000 W per unit. Dissipating that heat on Earth requires massive liquid cooling systems and complex HVAC architectures that consume even more energy. In the dense atmosphere of our home planet, moving heat away from a silicon die is an uphill battle against thermodynamics.

This physical ceiling is forcing AI infrastructure companies to look upward. While terrestrial facilities struggle with InfiniBand cable lengths and air-side economizers, the vacuum of space offers a radical alternative. By moving the heaviest training and inference workloads off-planet, the AI infrastructure ecosystem can expand without competing for the same electricity that lights our homes and powers our hospitals. The transition from Earth-bound facilities to space-based data centers is becoming a logistical necessity rather than a science-fiction dream.

The Lunar Advantage: Cooling, Power, and Launch Windows

The Moon is not just a destination for exploration; it is the ultimate heat sink. In the near-vacuum of the lunar environment, we can implement vacuum-cooled server racks that radiate heat directly into the 3 K background of deep space. This solves the thermal management issues that plague terrestrial AI data center infrastructure. Without an atmosphere to trap heat, engineers can pack chips more densely, drastically reducing the physical footprint of the compute cluster.
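To put a rough number on radiative cooling, the sketch below applies the Stefan-Boltzmann law to estimate how much radiator surface one 1,000 W chip would need. It is a minimal estimate, not a thermal design: the emissivity of 0.9, the one-sided ideal radiator, and the candidate radiator temperatures are illustrative assumptions, and the function name is hypothetical. Heat input from sunlight or from the lunar surface is ignored.

```python
# Stefan-Boltzmann constant in W / (m^2 K^4)
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_w, radiator_temp_k, emissivity=0.9, sink_temp_k=3.0):
    """Area of an ideal one-sided radiator rejecting heat_w watts to a cold sink.

    emissivity and sink_temp_k are assumed values for illustration.
    """
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than the sink")
    # Net radiated flux per square metre of radiator surface
    net_flux = emissivity * SIGMA * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_w / net_flux


if __name__ == "__main__":
    # Assumed radiator surface temperatures, in kelvin
    for temp_k in (300.0, 350.0, 400.0):
        area = radiator_area_m2(1000.0, temp_k)
        print(f"{temp_k:.0f} K radiator: {area:.2f} m^2 per 1,000 W chip")
```

Under these assumptions a 300 K radiator needs roughly 2.4 m² per chip, and the area falls with the fourth power of radiator temperature, so running the radiator hotter is the main lever for shrinking it.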

Energy availability is another primary driver for this shift. By placing photovoltaic arrays at the lunar north and south poles (specifically in areas known as the Peaks of Eternal Light), a lunar data center can access nearly continuous sunlight. This provides a constant, sustainable source of power that eliminates the need for the carbon-heavy backup generators used by many terrestrial AI data centers today.
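As a back-of-the-envelope check on the solar claim, the following sketch estimates the array area needed to power a gigawatt-scale cluster. It assumes the solar constant at 1 AU (about 1,361 W/m²), panels facing the Sun, and a hypothetical 30% cell efficiency; the function name and the efficiency figure are illustrative, not taken from any published design.

```python
# Approximate solar irradiance at 1 AU, in W/m^2
SOLAR_CONSTANT_W_M2 = 1361.0


def array_area_m2(load_w, efficiency=0.30, irradiance_w_m2=SOLAR_CONSTANT_W_M2):
    """Sun-facing panel area needed to supply load_w watts.

    efficiency is an assumed cell efficiency; losses from wiring,
    conversion, and partial shadowing are ignored.
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return load_w / (irradiance_w_m2 * efficiency)


if __name__ == "__main__":
    area = array_area_m2(1e9)  # a 1 GW cluster
    print(f"1 GW load: {area / 1e6:.2f} km^2 of panels")
```

With these inputs a 1 GW load works out to roughly 2.4 to 2.5 km² of panels, which gives a sense of the scale of hardware that would have to be delivered or manufactured on site.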

Furthermore, the Moon provides a much more responsive logistical loop than Mars. While Mars is only accessible every 26 months, the Moon offers launch windows every 10 days. This allows for rapid iteration, hardware upgrades, and maintenance cycles. Below is a comparison of how the Moon stacks up against traditional and deep-space alternatives:

| Feature | Earth Data Centers | Mars Infrastructure | Lunar Infrastructure |
|---|---|---|---|
| Cooling Method | Water/Air (Expensive) | Atmospheric (Limited) | Vacuum Radiation (Ideal) |
| Power Source | Grid/Diesel | Nuclear/Limited Solar | Perpetual Solar Arrays |
| Launch Window | N/A | Every 26 Months | Every 10 Days |
| Latency to Earth | < 50 ms | 3 to 22 Minutes | ~1.3 Seconds |
| Gravity Constraint | 1.0g | 0.38g | 0.16g |
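The latency figures in the comparison follow directly from the speed of light. The sketch below reproduces them as one-way signal delays, assuming a mean Earth-Moon distance of about 384,400 km and approximate Earth-Mars distances of 54.6 million km (closest approach) and 401 million km (farthest); these distances are rounded reference values supplied for illustration.

```python
# Speed of light in km/s
C_KM_PER_S = 299_792.458

# Approximate distances from Earth in km (assumed, rounded values)
MOON_MEAN_KM = 384_400.0
MARS_NEAREST_KM = 54.6e6
MARS_FARTHEST_KM = 401.0e6


def one_way_delay_s(distance_km):
    """One-way light-travel time in seconds over distance_km."""
    return distance_km / C_KM_PER_S


if __name__ == "__main__":
    print(f"Moon: {one_way_delay_s(MOON_MEAN_KM):.2f} s")
    print(f"Mars (nearest): {one_way_delay_s(MARS_NEAREST_KM) / 60:.1f} min")
    print(f"Mars (farthest): {one_way_delay_s(MARS_FARTHEST_KM) / 60:.1f} min")
```

These are one-way delays. A request and its response take twice as long, so an interactive round trip to the Moon is closer to 2.6 seconds before any processing time.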

To protect sensitive electronics from solar radiation and micrometeorites, developers are turning to lunar regolith processing. By burying server modules under several meters of lunar soil, or regolith, companies can create highly insulated, radiation-hardened bunkers. This in-situ resource utilization reduces the mass that needs to be launched from Earth, significantly lowering the cost of deploying AI infrastructure for galactic-scale computing.

The xAI & SpaceX Synergy: Building the 'Self-Growing City'

Elon Musk’s vision for xAI and SpaceX is not just about rockets; it is about creating a self-sustaining industrial base. Recent filings and public statements suggest that SpaceX has shifted its strategic focus toward establishing a "self-growing city" on the Moon within the next decade. This city will serve as the manufacturing hub for a new generation of AI-compute satellites and orbital nodes.

The logistical linchpin of this strategy is the SpaceX Starship. With its unprecedented payload capacity, Starship can deliver the massive cooling arrays and silicon wafers necessary to build a planetary-scale AI infrastructure ecosystem. Once established, the lunar base would utilize an electromagnetic "mass driver" catapult to launch AI-compute hardware into various orbits with minimal fuel consumption, leveraging the Moon's low gravity and lack of atmosphere.
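The appeal of a lunar mass driver comes down to how little kinetic energy a payload needs to leave the Moon compared with Earth. The sketch below computes escape velocity and the corresponding ideal energy per kilogram from each body's gravitational parameter and radius. The constants are standard approximate values, the function names are hypothetical, and drag, launcher efficiency, and orbital insertion are ignored.

```python
import math

# Gravitational parameter GM (m^3/s^2) and mean radius (m), approximate values
MOON_GM, MOON_RADIUS_M = 4.9028e12, 1.7374e6
EARTH_GM, EARTH_RADIUS_M = 3.986004e14, 6.371e6


def escape_velocity_m_s(gm, radius_m):
    """Surface escape velocity: v = sqrt(2 * GM / r)."""
    return math.sqrt(2.0 * gm / radius_m)


def launch_energy_mj_per_kg(velocity_m_s):
    """Ideal kinetic energy per kilogram at the given speed, in MJ/kg."""
    return 0.5 * velocity_m_s**2 / 1e6


if __name__ == "__main__":
    for name, gm, radius in (("Moon", MOON_GM, MOON_RADIUS_M),
                             ("Earth", EARTH_GM, EARTH_RADIUS_M)):
        v = escape_velocity_m_s(gm, radius)
        e = launch_energy_mj_per_kg(v)
        print(f"{name}: {v / 1000:.2f} km/s, {e:.1f} MJ/kg")
```

In this idealized comparison, escaping the Moon takes roughly 2.4 km/s and under 3 MJ per kilogram, around one twentieth of the energy needed to escape Earth, and there is no atmosphere to add drag or heating on the way out.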

By integrating Grok’s technical forecasting with SpaceX’s heavy-lift capabilities, xAI is positioning itself as the premier AI infrastructure company for the next century. This synergy allows for a closed-loop system where AI helps design more efficient lunar manufacturing processes, which in turn scales the compute power available to the AI. This off-world expansion ensures that the extreme compute requirements of next-generation models are met without placing further strain on Earth's environment or resources.

Geopolitics and the $1.1 Trillion AI Race

The push for lunar compute is not happening in a vacuum, politically speaking. As the United States and China compete for lunar dominance, AI infrastructure market segments in 2026 are becoming increasingly defined by space-based assets. China has set an ambitious 2030 goal for a lunar research station, prompting a sense of urgency in the Western private sector. The race is no longer just about planting flags; it is about which nation controls the off-world processing power that will drive future economies.

Market analysts now forecast the orbital and lunar data center sector to reach a valuation of $15-20 billion by 2030, with a staggering annual growth rate of 85-90%. This investment is driven by the realization that sustainable energy strategies for terrestrial AI infrastructure are reaching their limits. Large-scale investors are moving capital into companies that can provide multi-planetary data redundancy, ensuring that the world's most critical AI models are safe from terrestrial conflicts or grid failures.
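One way to sanity-check a forecast like this is to run the compound-growth formula backwards and see what starting market size it implies. The sketch below does that. The four-year horizon (2026 to 2030) is an assumption made here for illustration, since the forecast does not state its base year, and the function name is hypothetical.

```python
def implied_base_value(future_value, annual_growth, years):
    """Starting value implied by future_value = base * (1 + growth) ** years."""
    if years < 0 or annual_growth <= -1.0:
        raise ValueError("years must be >= 0 and growth must exceed -100%")
    return future_value / (1.0 + annual_growth) ** years


if __name__ == "__main__":
    YEARS = 4  # assumed horizon: 2026 to 2030
    # Values in billions of dollars, growth rates as fractions
    for target, growth in ((15.0, 0.85), (20.0, 0.90)):
        base = implied_base_value(target, growth, YEARS)
        print(f"${target:.0f}B by 2030 at {growth:.0%}/yr "
              f"implies about ${base:.2f}B at the start")
```

Under that assumed horizon, the forecast corresponds to a sector worth somewhere between $1 billion and $2 billion at the start of the period; a different base year would shift that figure substantially.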

SpaceX’s valuation reflects its role as the sole provider of heavy-lift logistics, and that role deepens the strategic moat around lunar-based compute. By moving intelligence 240,000 miles away, we are creating a resilient foundation for AGI that is independent of any single terrestrial power grid. This shift represents the most significant architectural change in the history of computing, moving from centralized Earth-bound hubs to a distributed, orbital compute architecture.

FAQ

What is AI infrastructure?

AI infrastructure refers to the integrated stack of hardware and software required to develop, train, and deploy artificial intelligence models. This includes high-performance GPUs or TPUs, high-speed networking like InfiniBand, specialized storage solutions, and the power and cooling systems necessary to keep these components running. As models grow, this infrastructure is increasingly moving toward specialized architectures like the lunar clusters proposed by SpaceX.

What are the five layers of AI infrastructure?

The five layers typically consist of the compute layer (GPUs and processors), the storage layer (high-throughput data lakes), the networking layer (low-latency interconnects), the orchestration layer (software like Kubernetes for managing workloads), and the energy/thermal layer (the power source and cooling systems). The shift to the Moon primarily addresses bottlenecks in the energy and thermal layers while utilizing vacuum for the networking environment.

Who has the best AI infrastructure?

Currently, companies like NVIDIA, Microsoft (through Azure), and Google lead the terrestrial market. However, xAI and SpaceX are quickly becoming the leaders in next-generation off-world infrastructure. By controlling the entire stack from the Starship delivery system to the Grok software and the underlying lunar manufacturing, Musk's companies are building a vertically integrated system that traditional cloud providers may struggle to replicate.

Which 3 jobs will survive AI?

While AI will automate many tasks, roles that require high-level strategic reasoning, complex physical interaction, and deep empathy are the most resilient. Specifically, trades requiring intricate manual dexterity and problem-solving in unpredictable environments (like plumbers or electricians), healthcare professionals who provide emotional support and nuanced bedside care, and creative strategists who direct AI systems to solve novel planetary-scale problems will remain essential.

The transition to a multi-planetary AI infrastructure ecosystem is no longer a matter of 'if,' but 'when.' As we exceed the physical limits of our home planet, the Moon stands ready to host the next great leap in human and artificial intelligence. By solving the power and thermal constraints of Earth, we are not just building faster computers; we are ensuring the long-term viability of the digital mind.