The transition from the "bump-and-run" era of robotic vacuums to the current age of autonomous spatial awareness marks one of the most significant leaps in domestic technology. In the early 2000s, these devices were little more than motorized hockey pucks that relied on physical impact to understand their surroundings. Today, the landscape is entirely different. Driven by a global market where the Asia Pacific region dominated with a 44.3% market share in 2021, manufacturers have funneled billions into navigation research. The result is a generation of machines that don’t just clean; they perceive.

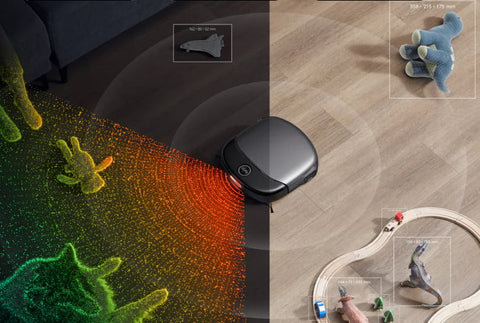

Robot vacuum mapping is the process by which a device utilizes specialized sensors—primarily LiDAR (Light Detection and Ranging), cameras (vSLAM), or gyroscopes—to construct a comprehensive digital layout of your home. This internal blueprint allows the vacuum to plan logical, zig-zag cleaning paths and pinpoint its exact location at all times. This sophisticated orchestration is governed by SLAM (Simultaneous Localization and Mapping), a software framework that enables the robot to build a map of an unknown environment while simultaneously tracking its own trajectory within it.
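The "logical, zig-zag" path described above is often called a boustrophedon sweep. Here is a minimal sketch, assuming the room has already been reduced to an obstacle-free grid of cells; the function name `zigzag_path` and the grid model are illustrative assumptions, not any manufacturer's planner.

```python
def zigzag_path(rows, cols):
    """Return every (row, col) cell of a rows x cols grid in zig-zag order."""
    path = []
    for r in range(rows):
        # Even rows sweep left to right, odd rows sweep right to left,
        # so the robot never has to travel back across the room.
        if r % 2 == 0:
            columns = range(cols)
        else:
            columns = range(cols - 1, -1, -1)
        path.extend((r, c) for c in columns)
    return path


if __name__ == "__main__":
    print(zigzag_path(2, 3))
    # [(0, 0), (0, 1), (0, 2), (1, 2), (1, 1), (1, 0)]
```

Real planners also have to split the room around furniture and stitch several such sweeps together, which this sketch leaves out.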

Core Mapping Technologies Explained

To understand how your robot navigates the labyrinth of chair legs and area rugs, we must look at the "eyes" of the machine. Many entry-level models use basic gyroscope and accelerometer mapping, which is essentially dead reckoning: the robot estimates its position by counting wheel rotations and measuring its turns. Premium models use more advanced optical and laser-based systems.
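To make the "counting wheel rotations and turns" idea concrete, here is a rough dead-reckoning sketch for a two-wheeled robot. The function name `dead_reckon`, the `wheel_base` parameter, and the example values are assumptions chosen for illustration; real firmware also blends in gyroscope readings and corrects for drift.

```python
import math


def dead_reckon(pose, d_left, d_right, wheel_base):
    """Update an (x, y, heading) pose from the distance each wheel travelled.

    pose       -- (x in m, y in m, heading in radians)
    d_left     -- distance covered by the left wheel, in metres
    d_right    -- distance covered by the right wheel, in metres
    wheel_base -- distance between the two wheels, in metres
    """
    x, y, theta = pose
    d_center = (d_left + d_right) / 2.0        # forward travel of the robot body
    d_theta = (d_right - d_left) / wheel_base  # change in heading
    # Advance along the average heading during the step.
    x += d_center * math.cos(theta + d_theta / 2.0)
    y += d_center * math.sin(theta + d_theta / 2.0)
    theta = (theta + d_theta) % (2.0 * math.pi)
    return (x, y, theta)


if __name__ == "__main__":
    # Both wheels roll 1 m: the robot drives straight ahead.
    print(dead_reckon((0.0, 0.0, 0.0), 1.0, 1.0, 0.25))
```

Because every step builds on the previous estimate, small errors accumulate, which is why this approach is less reliable than laser or camera mapping over a whole house.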

LiDAR (Light Detection and Ranging)

LiDAR is widely considered the gold standard for precision. A LiDAR-equipped vacuum features a rotating laser turret (often visible as a small "hat" on top of the unit) that emits thousands of laser pulses per second. By measuring the "Time of Flight" (the time it takes for each pulse to bounce off a wall or piece of furniture and return to the sensor), the robot calculates distances with high precision; manufacturers often quote millimeter-level accuracy at short range. This technology allows the vacuum to "see" in total darkness, as it does not rely on ambient light to function.
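The Time of Flight arithmetic is simple: the pulse travels to the obstacle and back, so the distance is half the round trip multiplied by the speed of light. The sketch below uses hypothetical function names and shows only the physics, not any particular sensor's firmware.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s):
    """Distance to the obstacle, given the pulse's round-trip time in seconds."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def timing_needed_s(range_resolution_m):
    """Timing resolution required to tell apart two distances this far apart."""
    return 2.0 * range_resolution_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A 20 ns round trip corresponds to a wall roughly 3 m away.
    print(round(tof_distance_m(20e-9), 3))
    # Resolving 1 mm requires timing on the order of picoseconds.
    print(timing_needed_s(0.001))
```

The second function hints at why laser ranging demands specialized electronics: light covers a millimeter far faster than ordinary timers can measure.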

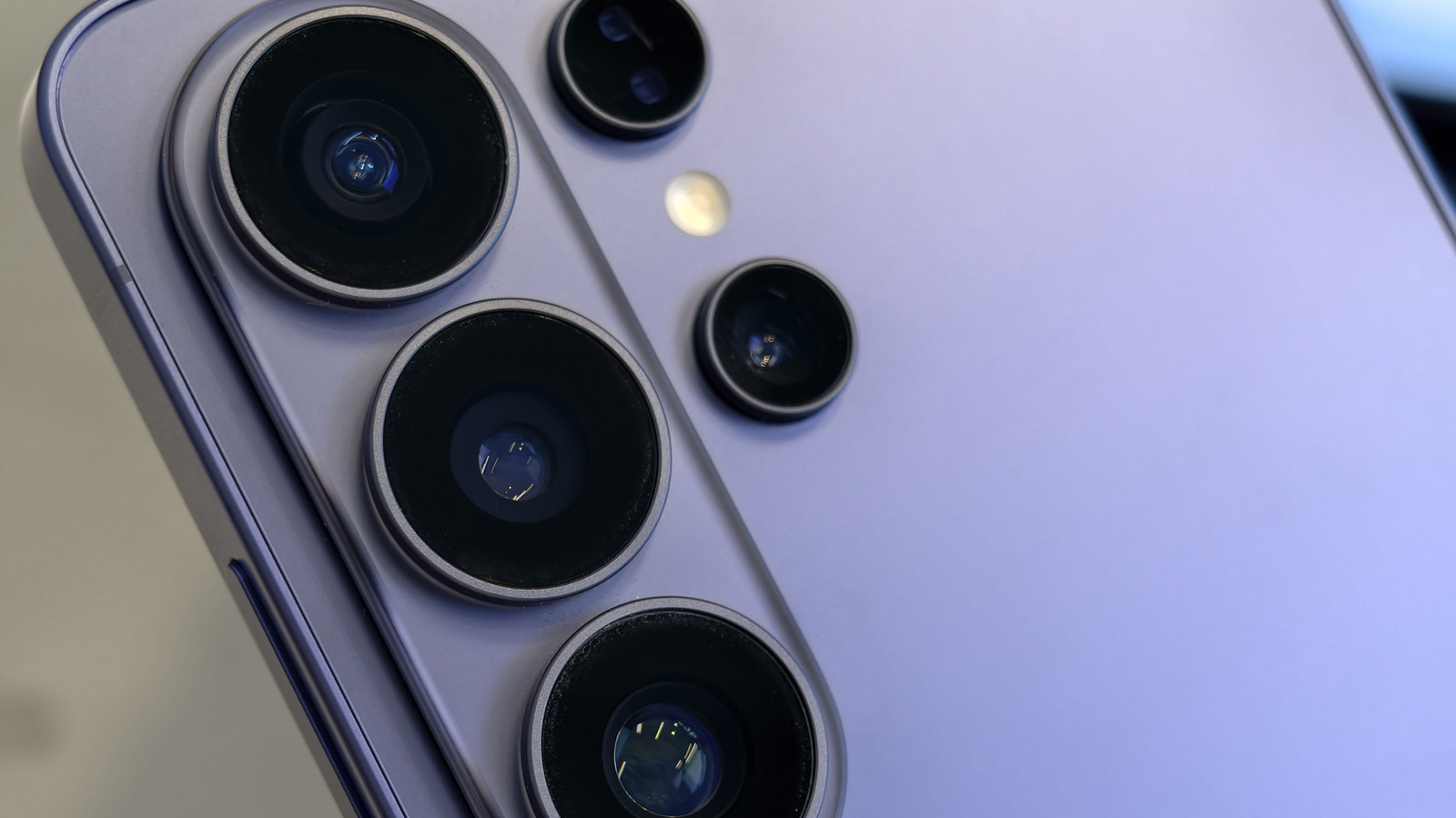

vSLAM (Visual SLAM)

Unlike the laser-focused approach of LiDAR, vSLAM relies on a high-resolution camera and complex AI algorithms. The camera captures images of the ceiling, walls, and distinct landmarks (like the edge of a sofa or a picture frame). The vacuum’s processor then identifies "feature points" in these images to triangulate its position. While vSLAM can struggle in pitch-black rooms, it excels at semantic recognition—understanding not just that an object is in the way, but what that object is.
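Triangulation can be illustrated in two dimensions: if the same feature point is sighted from two known positions, the two sight lines intersect at the feature. This is a simplified sketch with assumed names (`triangulate`, bearings in radians); real vSLAM works in 3D with many features at once and has to account for camera calibration and noise.

```python
import math


def triangulate(p1, bearing1, p2, bearing2):
    """Intersect two sight lines; return the (x, y) of the landmark or None.

    p1, p2             -- (x, y) positions the landmark was observed from
    bearing1, bearing2 -- direction to the landmark from each position, radians
    """
    d1 = (math.cos(bearing1), math.sin(bearing1))
    d2 = (math.cos(bearing2), math.sin(bearing2))
    denom = d1[0] * d2[1] - d1[1] * d2[0]
    if abs(denom) < 1e-9:
        return None  # Sight lines are parallel: no usable intersection.
    dx, dy = p2[0] - p1[0], p2[1] - p1[1]
    # Distance along the first sight line to the intersection point.
    t1 = (dx * d2[1] - dy * d2[0]) / denom
    return (p1[0] + t1 * d1[0], p1[1] + t1 * d1[1])


if __name__ == "__main__":
    # Seen at 45 degrees from (0, 0) and 135 degrees from (2, 0):
    # the landmark sits at approximately (1, 1).
    print(triangulate((0.0, 0.0), math.radians(45), (2.0, 0.0), math.radians(135)))
```

The parallel-lines case is one reason a camera-based robot has to move before it can place a feature: two views from nearly the same spot give almost no depth information.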

The Software Brain: SLAM

Whether the input is light pulses or pixels, the data is processed via SLAM (Simultaneous Localization and Mapping). Think of SLAM as the vacuum’s internal cartographer. It solves a classic "chicken and egg" problem: the robot needs a map to know where it is, but it needs to know where it is to build the map. By constantly updating its probability of location based on new sensor data, the robot ensures that the digital map remains accurate even if you move a chair or close a door.
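The phrase "updating its probability of location" can be sketched with a tiny histogram filter. To keep it short, this example covers only the localization half of SLAM and assumes the map (a looped corridor of "door" and "wall" cells) is already known; the world, the function names, and the sensor reliabilities `p_hit` and `p_miss` are all made up for illustration.

```python
def predict(belief, step):
    """Shift the belief by `step` cells, assuming perfect motion on a loop."""
    n = len(belief)
    return [belief[(i - step) % n] for i in range(n)]


def update(belief, world, reading, p_hit=0.8, p_miss=0.2):
    """Re-weight each cell by how well it explains the sensor reading."""
    weighted = [
        b * (p_hit if cell == reading else p_miss)
        for b, cell in zip(belief, world)
    ]
    total = sum(weighted)
    return [w / total for w in weighted]


if __name__ == "__main__":
    world = ["door", "door", "wall", "wall", "wall"]
    belief = [1.0 / len(world)] * len(world)  # Start with no idea where we are.

    belief = update(belief, world, "door")    # The robot senses a door...
    belief = predict(belief, 1)               # ...moves one cell forward...
    belief = update(belief, world, "wall")    # ...and now senses a wall.

    print(belief.index(max(belief)))          # Most likely cell: 2
```

Full SLAM estimates the map and the pose together, but the rhythm is the same: predict from motion, then correct from sensor data.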

LiDAR vs. vSLAM: Which Navigation is Best for Your Home?

Choosing between these two technologies often depends on your home’s layout and your specific cleaning habits. As a critic who has tested dozens of these units, I find that the "best" technology is the one that minimizes human intervention.

| Feature | LiDAR Navigation | vSLAM Navigation |
|---|---|---|
| Mapping Speed | Extremely Fast (seconds) | Moderate (requires movement) |
| Low Light Performance | Excellent (Works in the dark) | Poor (Requires ambient light) |
| Object Recognition | Low (Sees obstacles as "blocks") | High (Identifies shoes, cables, etc.) |
| Precision | High (Millimeter accuracy) | Moderate (Dependent on image quality) |
| Profile Height | Taller (Due to laser turret) | Slimmer (Camera is usually flush) |

Accuracy and Precision

In my testing, LiDAR models consistently generate more accurate floor plans on the first pass. Because the laser can "see" through a doorway from across the room, it maps the entire floor plan significantly faster than a camera-based unit, which must physically enter a room to see it.
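To show how a single laser sweep turns into floor-plan data, the sketch below converts range readings into occupied grid cells. The `(angle, distance)` scan format, the 0.5 m cell size, and the function name `scan_to_cells` are assumptions for illustration; actual robots use much finer grids and also track which cells are known to be free.

```python
import math


def scan_to_cells(pose, scan, cell_size=0.5):
    """Convert a LiDAR scan into the set of grid cells where obstacles were hit.

    pose -- (x in m, y in m, heading in radians) of the robot
    scan -- iterable of (angle relative to heading in radians, distance in m)
    """
    x, y, theta = pose
    cells = set()
    for angle, distance in scan:
        a = theta + angle
        hit_x = x + distance * math.cos(a)
        hit_y = y + distance * math.sin(a)
        cells.add((math.floor(hit_x / cell_size), math.floor(hit_y / cell_size)))
    return cells


if __name__ == "__main__":
    # One reading straight ahead at 2.2 m, one to the left at 1.2 m.
    scan = [(0.0, 2.2), (math.pi / 2.0, 1.2)]
    print(scan_to_cells((0.0, 0.0, 0.0), scan))
```

A reading that passes through an open doorway simply lands in a cell on the far side, which is how a laser can start filling in a room the robot has not yet entered.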

Lighting Conditions

This is a critical differentiator. If you prefer to run your vacuum at night to wake up to clean floors, LiDAR is the clear winner. A vSLAM vacuum without a dedicated headlamp will likely get "lost" in a dark living room, eventually defaulting to a less efficient "bump" mode or simply returning to its base.

Obstacle Recognition

This is where vSLAM strikes back. Standard LiDAR can tell there is an object on the floor, but it cannot tell the difference between a decorative tassel on a rug and a pile of pet waste. Modern vSLAM systems use AI-powered object avoidance to recognize specific household hazards.

Pricing Trends

Advancements in manufacturing have democratized this technology. While mapping was once a $700+ luxury, the market has matured rapidly. Entry-level mapping models using gyroscopes or basic LiDAR can now be found for as low as $100, making structured cleaning accessible to almost every household.

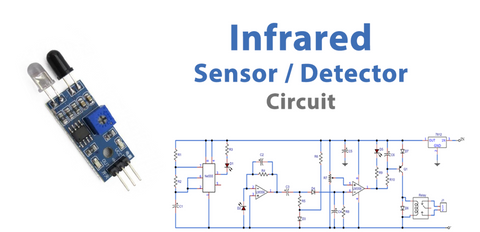

The 'Safety Net': Essential Sensor Types

Mapping is only half the battle. To navigate safely, a robot vacuum utilizes a suite of secondary sensors that act as its "nervous system." Without these, even the most expensive LiDAR unit would be a liability in a multi-story home.

- Cliff Sensors: Located on the undercarriage, these infrared sensors send constant signals to the floor. When the signal doesn't bounce back (indicating a drop), the vacuum instantly halts, preventing a catastrophic fall down the stairs.

- Bump & Wall Sensors: Wall sensors are proximity sensors that let the robot slow down before making contact with walls, while the bumper registers any contact that still occurs. Together they ensure a "soft touch" that protects your baseboards and furniture while allowing the side brushes to clean right up to the edge.

- Optical Encoders: Integrated into the wheels, these sensors measure exactly how many rotations a wheel has made. The robot checks this data against its internal map and its other sensors, so that wheel slippage on a slick floor can be caught before it throws off the navigation.
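The sensor checks in the list above boil down to a few comparisons. The sketch below is illustrative only: the thresholds, the normalized infrared reading, and the function names are assumptions rather than values from any real product.

```python
import math


def cliff_detected(ir_return, floor_threshold=0.2):
    """True when too little infrared light bounces back from the floor.

    ir_return -- reflected signal strength, normalized to the range 0.0 to 1.0
    """
    return ir_return < floor_threshold


def ticks_to_distance_m(ticks, ticks_per_rev, wheel_diameter_m):
    """Convert encoder ticks into the distance the wheel rim has rolled."""
    return (ticks / ticks_per_rev) * math.pi * wheel_diameter_m


def slip_suspected(encoder_m, reference_m, tolerance_m=0.05):
    """True when the wheels claim a different distance than another estimate
    (for example, one derived from the map) by more than the tolerance."""
    return abs(encoder_m - reference_m) > tolerance_m


if __name__ == "__main__":
    print(cliff_detected(0.05))         # Weak return: treat as a drop.
    print(slip_suspected(0.30, 0.10))   # Wheels turned more than the robot moved.
```

Note that an encoder alone cannot notice slippage, because a spinning wheel still produces ticks; the comparison with a second source of position information is what exposes it.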

How to Create the Perfect Map for Your Robot

The efficacy of your vacuum is only as good as the map it creates. To get the most out of a high-end mapping unit, you need to facilitate a clean "Mapping Run."

- Prepare the Environment: Before the first run, clear the floor of "robot traps"—loose charging cables, thin tassels on rugs, and small toys. Open all internal doors to allow the robot full access.

- The Mapping Run: Most modern robots offer a "Quick Mapping" mode. In this mode, the robot doesn't vacuum; it simply cruises through the home to build its initial blueprint. This is faster and preserves battery.

- Customizing the Layout: Once the map is generated in the app, you can merge or divide rooms, name them (e.g., "Kitchen"), and most importantly, set No-Go Zones.
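Conceptually, a No-Go Zone is just a region of the map that the planner refuses to enter. Here is a minimal sketch, assuming rectangular zones in map coordinates; the `NoGoZone` class and `allowed_waypoints` helper are hypothetical names, and real apps may store zones differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NoGoZone:
    """An axis-aligned rectangle on the map, in metres."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x, y):
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def allowed_waypoints(waypoints, zones):
    """Drop every planned waypoint that falls inside any No-Go Zone."""
    return [
        (x, y)
        for x, y in waypoints
        if not any(zone.contains(x, y) for zone in zones)
    ]


if __name__ == "__main__":
    pet_bowls = NoGoZone(1.0, 1.0, 2.0, 2.0)
    route = [(0.5, 0.5), (1.5, 1.5), (3.0, 1.0)]
    print(allowed_waypoints(route, [pet_bowls]))
    # [(0.5, 0.5), (3.0, 1.0)]
```

A full planner would also reroute around the zone rather than merely skipping points, but the containment test is the core of the feature.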

For those with multi-story homes, modern premium vacuums are now capable of storing between 3 and 5 unique floor plans. When you carry the robot to the second floor, it recognizes its surroundings and automatically loads the correct map, complete with your saved virtual walls and restricted areas.
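How a robot "recognizes its surroundings" varies by manufacturer and is rarely documented. One plausible approach, sketched here purely as an assumption, is to compare the obstacles seen in the first few seconds against each saved map and pick the best match. The cell-set representation, the function names, and the 0.6 threshold are all hypothetical.

```python
def match_score(observed, stored):
    """Fraction of freshly observed obstacle cells that also exist in a saved map."""
    if not observed:
        return 0.0
    return len(observed & stored) / len(observed)


def select_map(observed, saved_maps, min_score=0.6):
    """Return the name of the best-matching saved map, or None if nothing fits."""
    best_name, best_score = None, 0.0
    for name, cells in saved_maps.items():
        score = match_score(observed, cells)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= min_score else None


if __name__ == "__main__":
    saved_maps = {
        "ground floor": {(0, 0), (0, 1), (0, 2), (1, 2)},
        "upstairs": {(5, 5), (5, 6), (6, 6), (7, 6)},
    }
    first_glance = {(5, 5), (5, 6), (6, 6)}
    print(select_map(first_glance, saved_maps))  # upstairs
```

Returning `None` when no map fits well mirrors what users see in practice: on an unfamiliar floor, the robot typically starts a new map instead of forcing a bad match.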

Insider Tip: Maintenance Matters. The most common cause of "mapping drift" or navigation errors isn't a software bug; it's dust. Periodically wipe the LiDAR "eye" and the cliff sensors with a clean, dry microfiber cloth. A smudge on a cliff sensor can make the robot think it’s perpetually on the edge of a cliff, causing it to refuse to move.

Troubleshooting Common Mapping Issues

Even the most advanced SLAM algorithms can stumble. One common issue is the "Ghost Room," where a robot sees a large mirror or a floor-to-ceiling window and interprets the reflection as an entirely new room. If this happens, use the app to place a Virtual Wall over the mirror to prevent the robot from trying to "clean" the reflected space.

Another frequent problem is "Map Rotation." This occurs when the wheels slip (often on a wet floor or a high-pile rug), causing the robot to think it has turned 90 degrees when it hasn't. The resulting map will look skewed or overlapping. If your map looks like a piece of abstract art, the best solution is usually to delete the map and start a fresh mapping run after ensuring the floors are dry and clear.
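A little geometry shows why a heading mistake is so destructive. If the robot believes it is facing a different direction than it really is, everything it maps is swung around it, and the displacement grows with distance. The sketch below (function name assumed) computes how far a mapped point lands from its true position.

```python
import math


def position_error_m(distance_m, heading_error_deg):
    """How far a mapped point lands from its true spot, given a heading error.

    The true and mistaken positions lie on a circle around the robot, so the
    gap between them is the chord length: 2 * d * sin(error / 2).
    """
    return 2.0 * distance_m * math.sin(math.radians(heading_error_deg) / 2.0)


if __name__ == "__main__":
    # A wall 3 m away, mapped with a 90 degree heading error,
    # ends up more than 4 m from where it really is.
    print(round(position_error_m(3.0, 90.0), 2))  # 4.24
```

Even a few degrees of error shifts distant walls by tens of centimeters, which is why maps built after a slip look skewed or doubled.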

FAQ

Can a robot vacuum map my home in the dark?

Yes, if it uses LiDAR technology. LiDAR uses its own laser light source and does not need ambient light. However, vSLAM (camera-based) models require a well-lit room to navigate accurately, though some newer models include a built-in LED light to assist in the dark.

Do robot vacuums send images of my home to the cloud?

Most reputable brands encrypt mapping data. For vSLAM models, the "images" are often converted into mathematical feature points locally on the device rather than being stored as actual photos. If privacy is your primary concern, look for models with TÜV Rheinland privacy certification.

What happens if I move my furniture?

Modern SLAM-enabled vacuums are dynamic. If you move a coffee table, the robot will detect the change during its next cleaning cycle and update the map accordingly. If you move a large number of items, a quick re-map might be more efficient.

Experience the Future of Clean

The evolution of robot vacuum mapping has turned a novelty gadget into a mission-critical home appliance. By understanding the nuances between LiDAR’s precision and vSLAM’s intelligence, you can select the tool that best fits your architectural reality. Whether you have a sprawling multi-story estate or a compact urban apartment, there is a mapping solution designed to take the "chore" out of floor care.